LEION HEY

Apr 2021 - Dec 2023

LEION HEY is designed for the next generation of communication experiences. This pair of augmented reality glasses translates speech into captions and visualizes sounds with a variety of motion graphics. The glasses help people translate foreign languages into their native ones, and provide visualized hearing-aid services to dedicated groups in a way that was not possible before.

Contributions

Project management, software product definition, market analysis, user research, UX and UI design, system architecture design, branding strategy, marketing events

Design & Development Tools

Figma, Adobe After Effects, Xmind, SciChart WPF 3D Chart

LEION HEY Augmented Glasses were named among “The Top 10 Global Science and Technology Innovation Awards” by the UNESCO (United Nations Educational, Scientific and Cultural Organization) Netexplo Innovation Forum on April 13th, 2022.

THE DEMO INSPIRED US

In 2015, during the early stages of AR exploration, the idea came to us that we should use augmented reality glasses as a language translator. However, key technologies were not ready then: we needed lower-latency Automatic Speech Recognition (ASR) and translation engines, lower-power processors, and better AR displays.

In December 2020, we found that these technologies had made huge progress, and it was worth verifying again whether releasing an AR translator to the market was a good move. We spent 3 days creating a 'quick and dirty' demo based on our existing hardware: the microphone array captured an audio stream, which was then sent continuously to the online ASR and translation engine via WLAN.

Although we were using previous-generation AR hardware (bulky and tethered), the result was still very impressive. International students, tourists, and hearing-impaired people showed strong interest in purchasing the product. This feedback inspired us to do further research.

Research

We conducted 5 months of research before diving into the details of making the actual product. This research included:

Analysis of the market size for hearing aids and translation, competitors, and their products

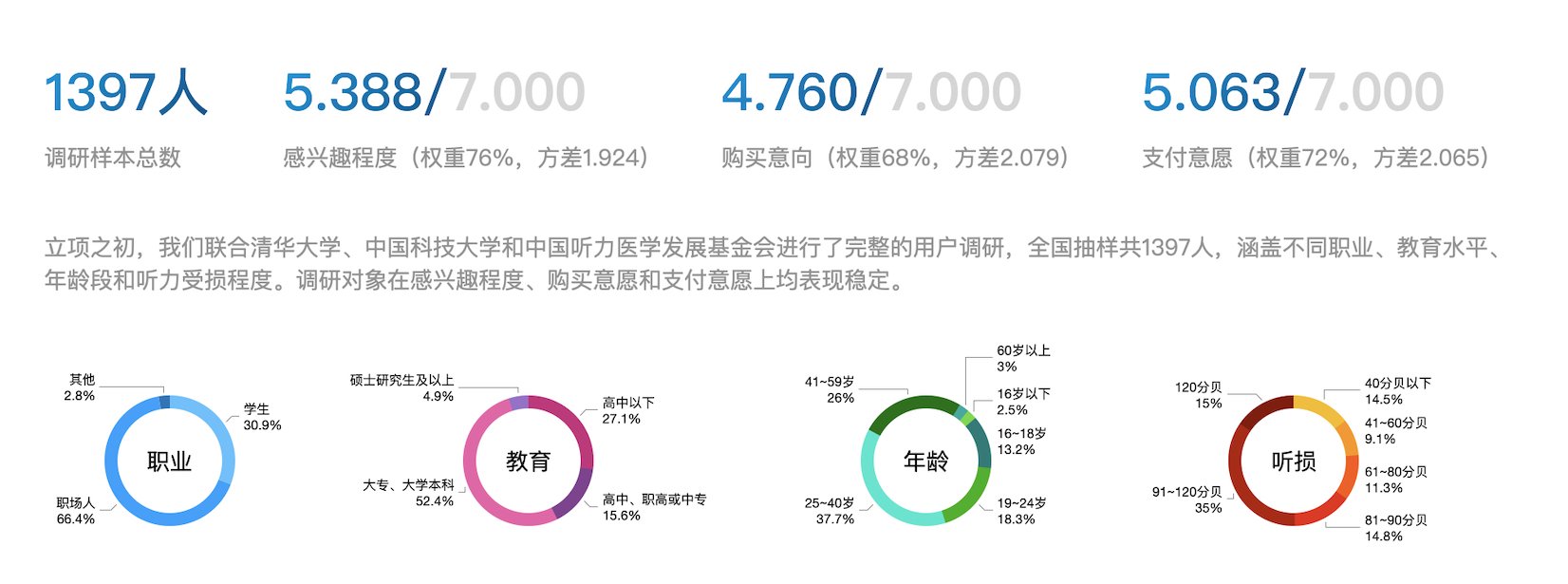

2000+ online surveys (buyer’s intentions)

400+ hours of focus group interviews

4000+ hours of field tests (cognition, )

50+ hours of expert reviews

The results were very promising.

The scores for interest (5.388/7.000) and purchase intention (4.760/7.000) were both high.

AR glasses help people perform 30 to 50% better in terms of situational awareness.

Autonomy, relatedness, competence, self-knowledge, communicative avoidance, and cognitive styles also improved significantly.

The report also indicated the appropriate price range, form factor, weight, and user personas.

Defining The Product

The product definition was derived from the research findings: “Fast and accurate communication experience, straight out of the box”.

01 Accurate and instant translation

Accurate speech recognition and semantic display

Accurate sentence segmentation

Display translation results as quickly as possible

02 Straight out of the box, easy to use

Easy to pair with smartphone and connect with WLAN

Start/End translation with just one click

Works without smartphone

03 Continuous use for 1 hour

Make captions comfortable to read

Make the power consumption as low as possible

Stable services required

04 Suitable for different scenarios

Be able to reduce noise

Be able to recognize accents and keywords

Switch between near and far field audio capturing capabilities

05 Low cost

Save cellular data

Compatible with more affordable voice recognition engines

Compatible with conventional smartphones

06 Make users stick to the product

Update with attractive new features every month

Bring in gamification and social comparison

Notify users about operating activities and product information

Kick-off With Limited Resources

This project started with only 10 people. This very small team included 1 Electronic Engineer, 1 Optical Engineer, 1 Mechanical Engineer, 4 Software Developers, 1 Software QA Engineer, 1 Marketing Specialist, and 1 Product/Project Manager and UX/UI Designer (me).

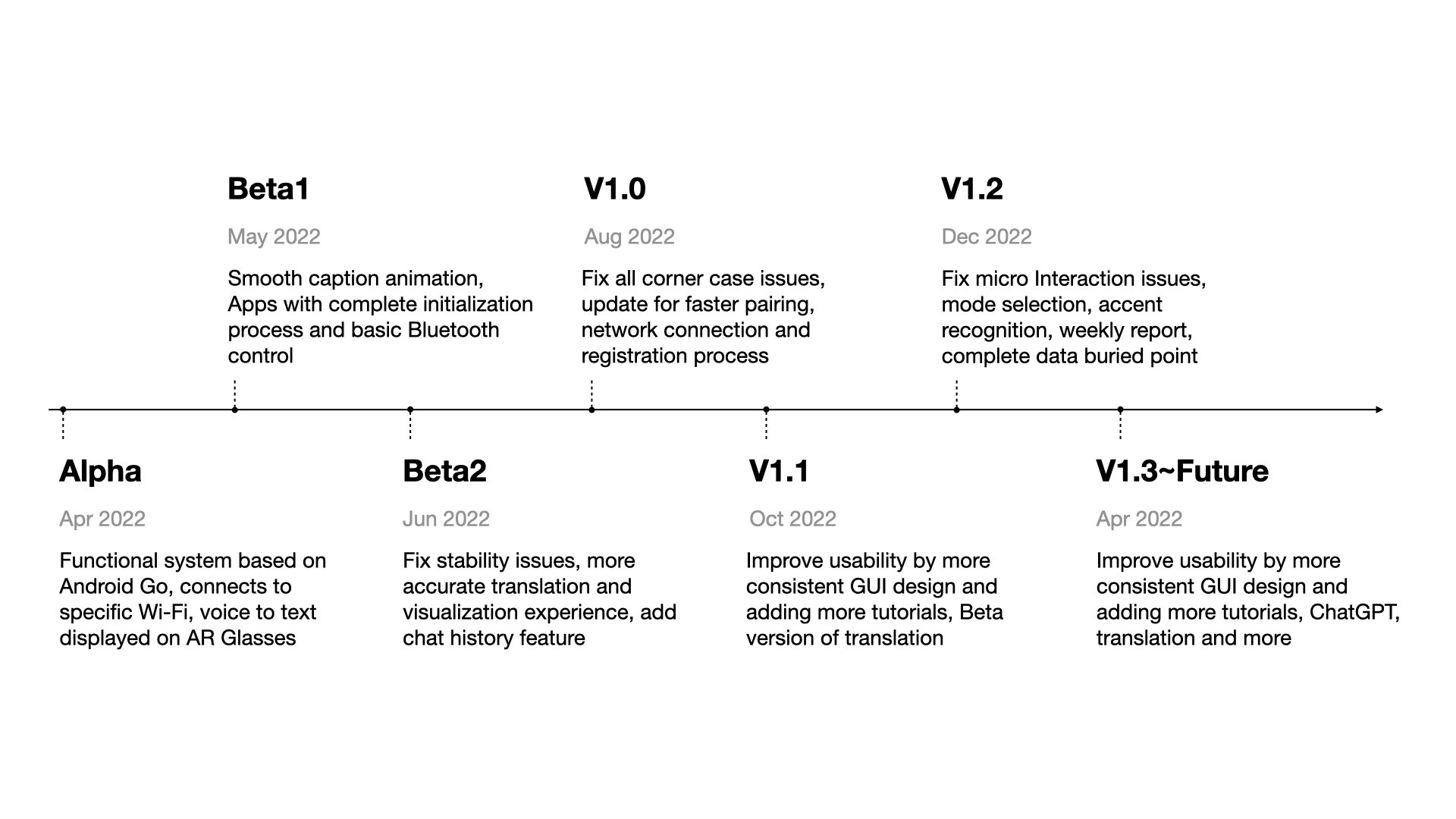

We quickly set up the roadmap and decided to release the Beta version for demonstration within 3 months.

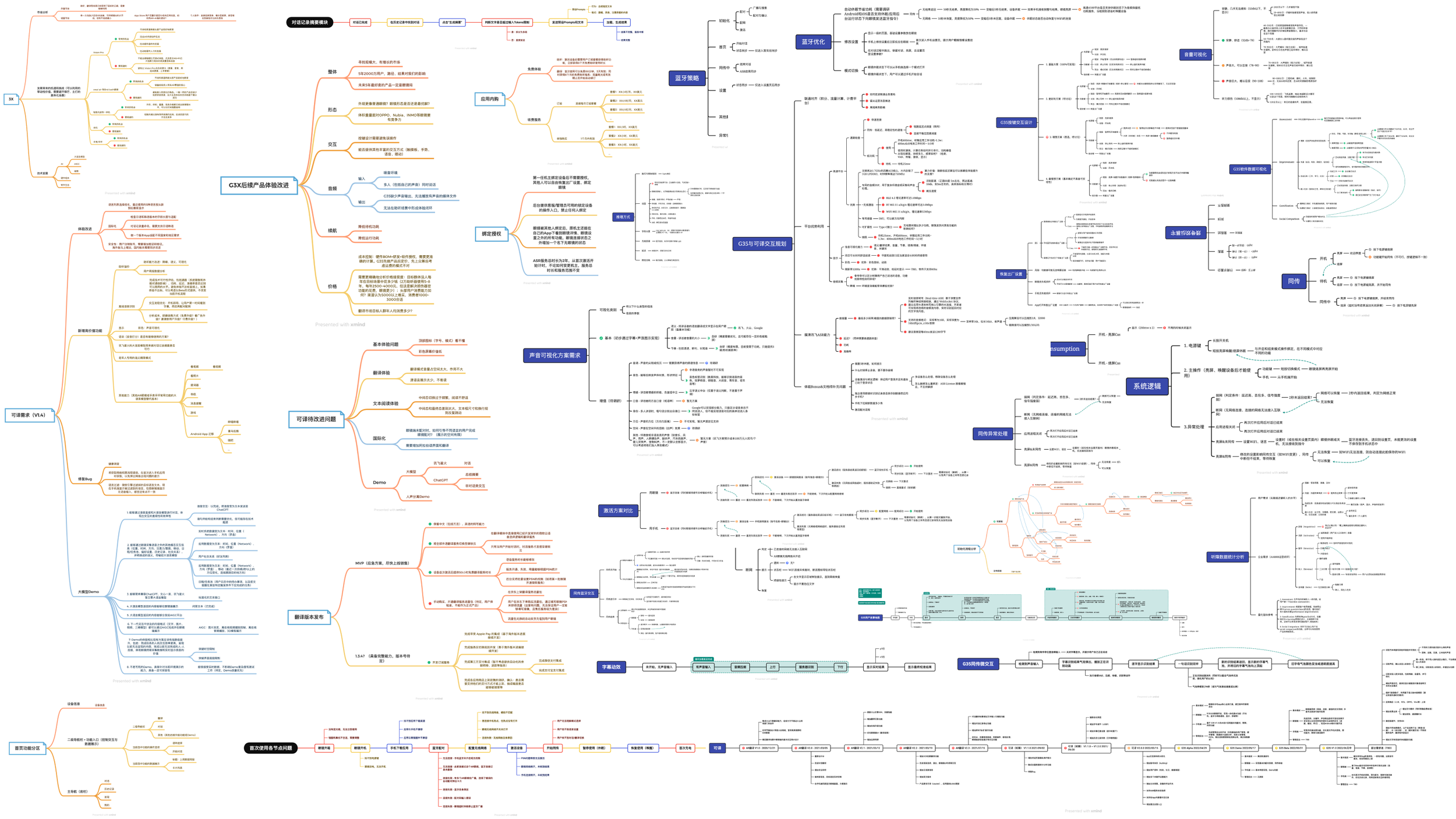

Architecture Analysis

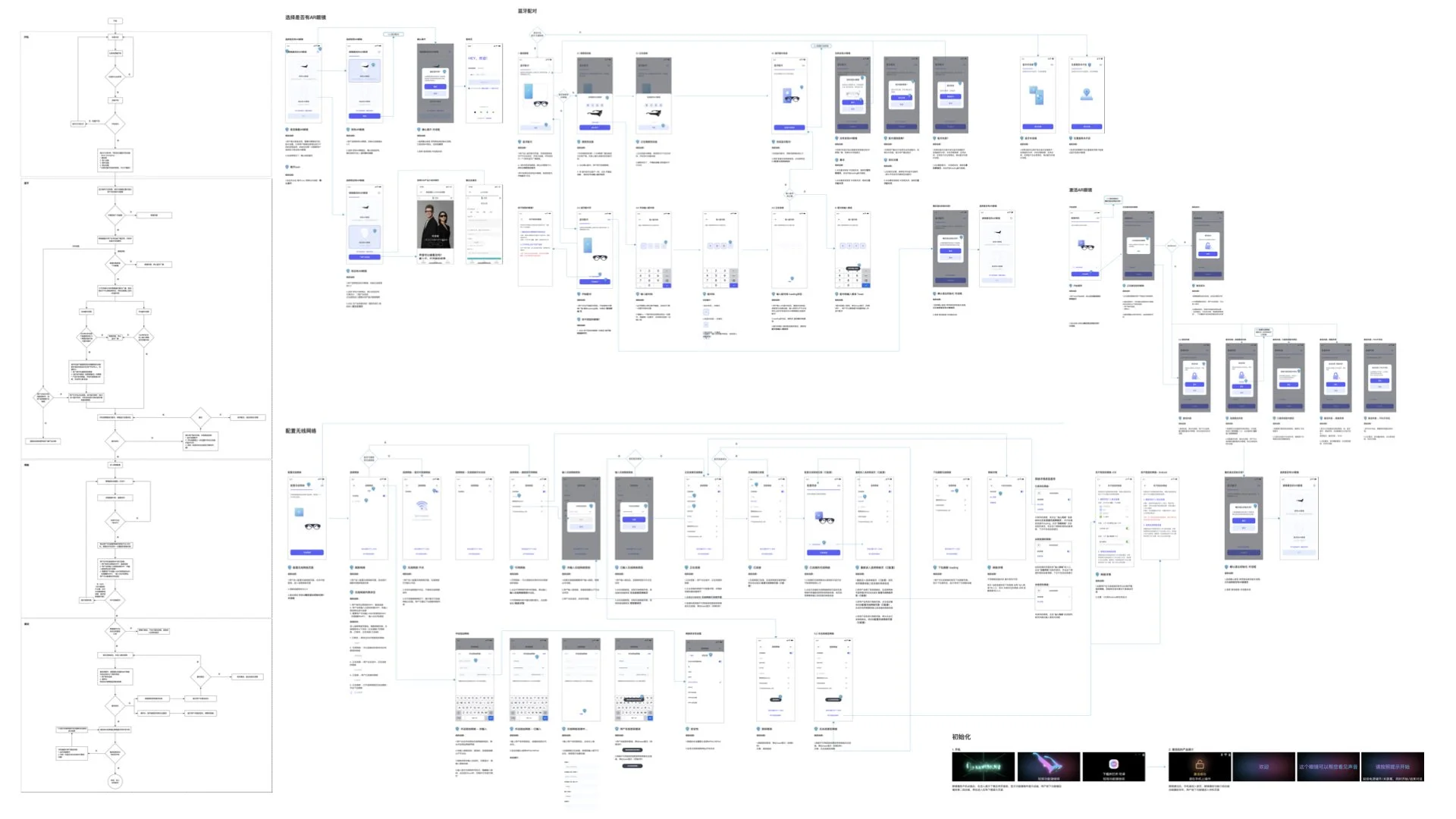

Through a comprehensive analysis from various dimensions and by integrating user story maps, we carefully examined foundational requirements, value-added services, Bluetooth and WLAN communication, power optimization, button and voice interactions, data tracking, and other modules. We formulated rules for regional services, in-app purchases, and offline/online algorithm collaboration. Additionally, we designed a software architecture that is low-power, efficient, and capable of accommodating the primary upgrades expected in the next two years.

Accurate And Instant Translation

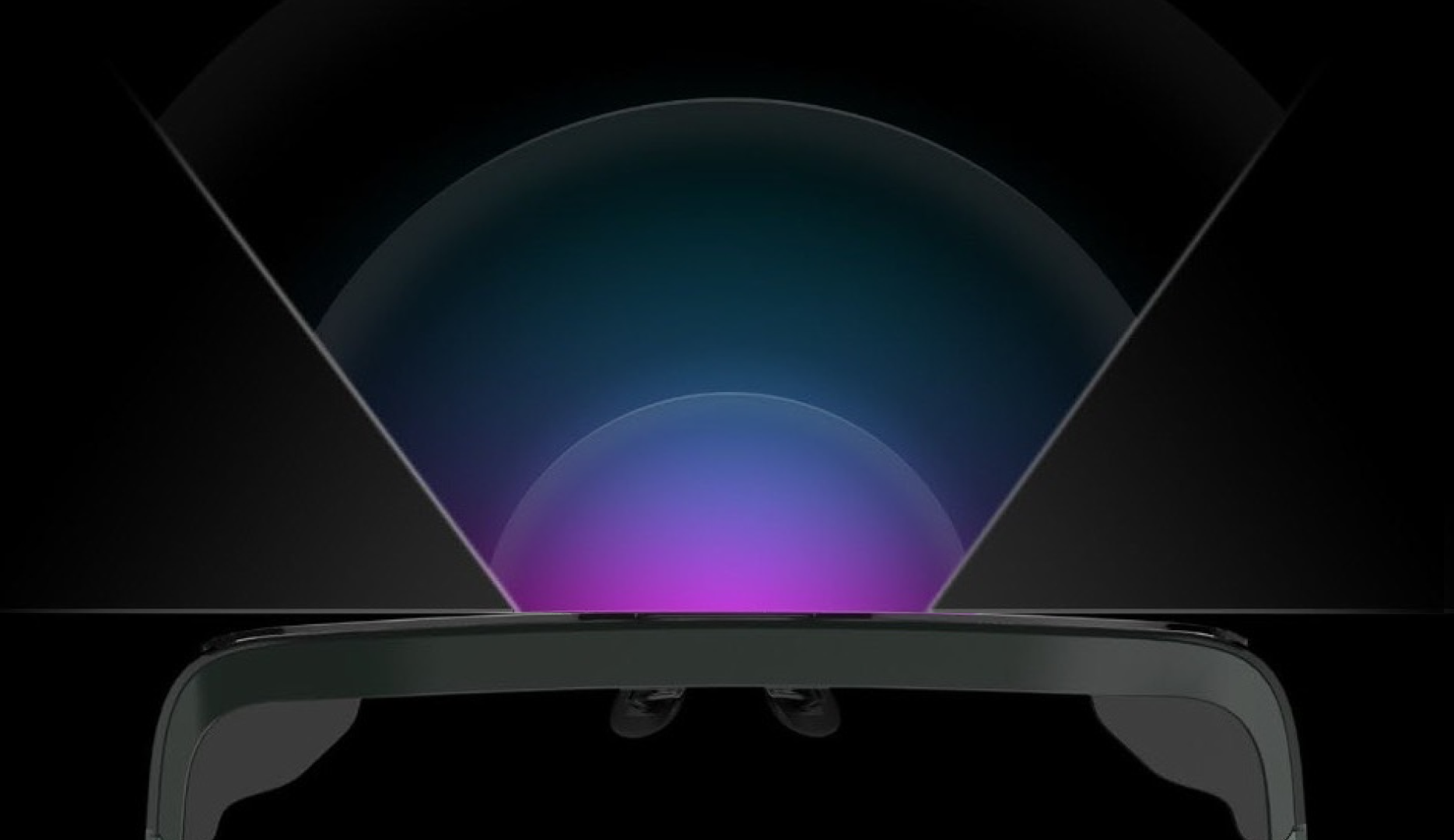

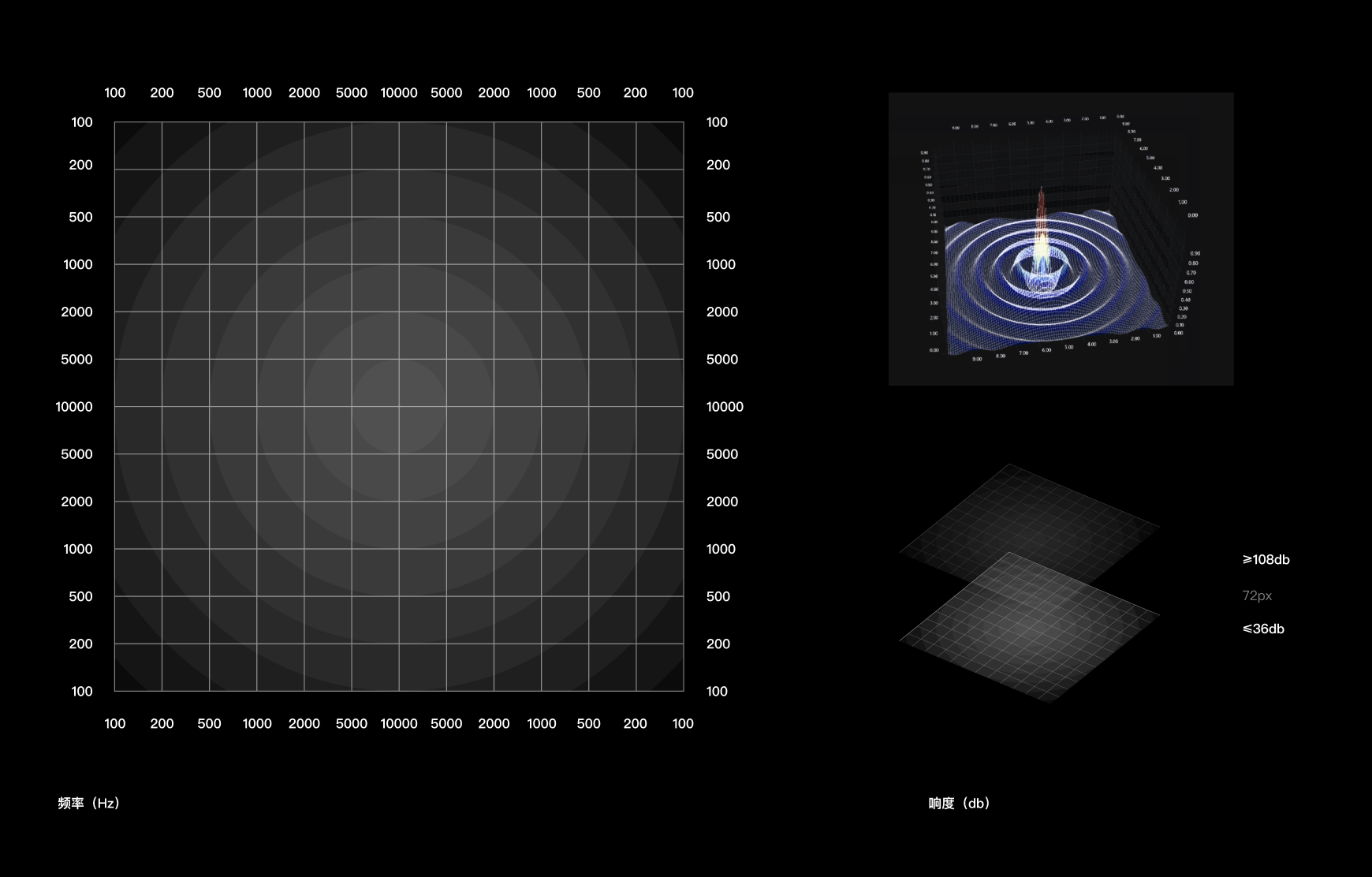

Figure labels: 60°, 10 dB

However, achieving excellence in product experience requires more than just noise reduction and ASR engine accuracy. We need to match captions with speech rate, pauses, and punctuation patterns too.

To ensure real-time translation, AR glasses transmit the current live speech in segmented form to an online ASR engine. The fastest possible translation of the current sentence is returned before the entire sentence is completed.

To ensure translation accuracy, when the AR glasses detect the completion of the entire sentence, they request the ASR engine to perform a semantic recalibration of the entire sentence. Additionally, the speech translation results received by the AR glasses are displayed as subtitles based on semantics and intonation, aiming to visually replicate the pauses and sentence breaks during user speech as much as possible.

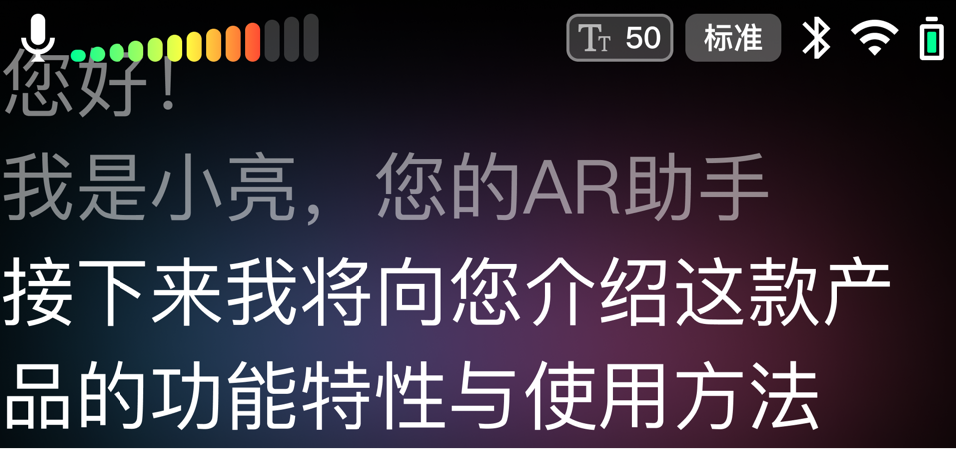

The current sentence will be highlighted to distinguish it from previously translated sentences.
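The two-pass flow described above (fast partial results, then a whole-sentence recalibration) can be illustrated with a minimal sketch. The class and method names here are hypothetical and are not taken from the product's code; the sketch only shows how a provisional caption might be overwritten by the final one, with the in-progress sentence flagged for highlighting.

```python
from dataclasses import dataclass, field


@dataclass
class CaptionBuffer:
    """Holds finished sentences plus the sentence still being spoken (hypothetical)."""
    finalized: list = field(default_factory=list)
    current: str = ""

    def on_partial(self, text: str) -> None:
        # Fast, provisional result for the sentence in progress;
        # each new partial overwrites the previous one.
        self.current = text

    def on_final(self, text: str) -> None:
        # Recalibrated whole-sentence result replaces the provisional text.
        self.finalized.append(text)
        self.current = ""

    def render(self) -> list:
        # Each entry is (text, highlighted); only the in-progress
        # sentence is highlighted.
        lines = [(s, False) for s in self.finalized]
        if self.current:
            lines.append((self.current, True))
        return lines


buf = CaptionBuffer()
buf.on_partial("How are")
buf.on_partial("How are you")
buf.on_final("How are you?")
buf.on_partial("I am")
print(buf.render())
```

Keeping the provisional text separate from the finalized list means a late correction never rewrites sentences the user has already read.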

Clearly, a high-precision translation experience requires accurate speech recognition and translation engines as well as outstanding noise reduction algorithms. However, we are still far from the ideal AR experience, so compromises need to be made while maintaining usability.

After weeks of research and lab simulations, we decided to give the front microphone priority for capturing sound arriving from the front within a 60° horizontal field of view, while sound from other directions receives an additional 10 dB of noise reduction from the algorithm. This way, users get relatively accurate sound capture simply by turning their head toward the person who is speaking, and see the translation result almost instantly.
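As a rough illustration of the 60° / 10 dB rule, the sketch below models the pickup pattern as a hard step: full gain inside the front zone, and 10 dB less outside it. This is a simplification under stated assumptions; a real microphone-array algorithm would have a smoother transition, and the function name is hypothetical.

```python
FRONT_FOV_DEG = 60.0        # from the text: horizontal front pickup zone
EXTRA_REDUCTION_DB = 10.0   # from the text: extra reduction outside the zone


def directional_gain(azimuth_deg: float) -> float:
    """Linear amplitude gain for a source at azimuth_deg (0 = straight ahead)."""
    # Normalize the angle to the range [-180, 180).
    a = (azimuth_deg + 180.0) % 360.0 - 180.0
    if abs(a) <= FRONT_FOV_DEG / 2:
        return 1.0
    # Convert the dB reduction to a linear amplitude factor.
    return 10 ** (-EXTRA_REDUCTION_DB / 20)


for angle in (0, 25, 45, 180):
    print(angle, round(directional_gain(angle), 3))
```

A 10 dB reduction corresponds to roughly one third of the original amplitude, which is why turning the head toward the speaker makes such a noticeable difference.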

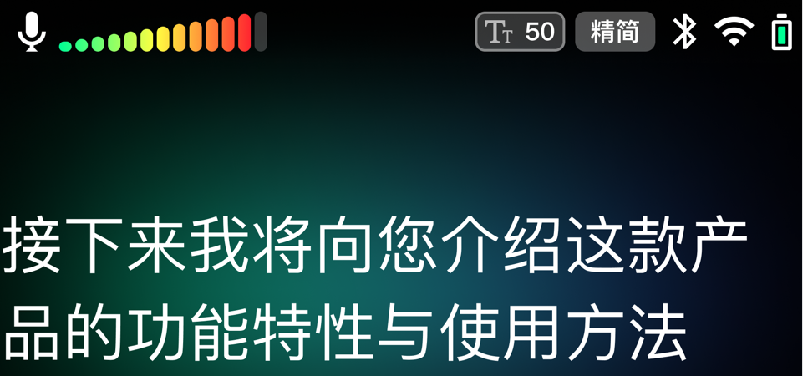

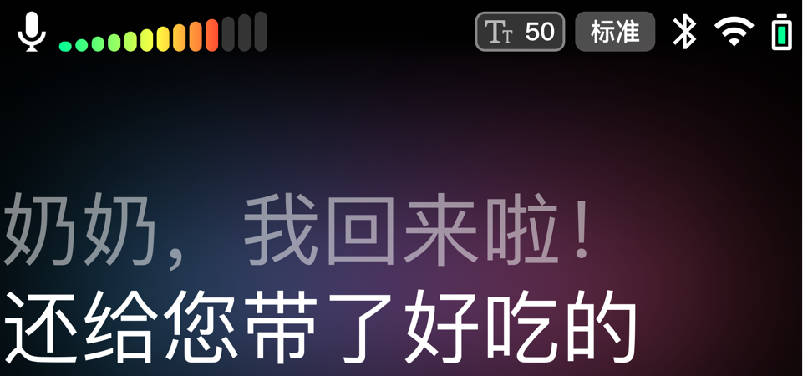

Comfortable To Read

In the research process, we identified that a key factor for broader user acceptance is to enable users to easily use the product for at least one hour, meeting the basic requirements for activities such as attending classes, meetings, and watching programs. Therefore, we carefully analyzed three factors related to reading comfort: text box width, font size, and subtitle scrolling animation.

Firstly, in augmented reality, we should define text using the FOV rather than pixel count. Combining anthropometric data, we ultimately restricted the AR glasses to display text within a 20° FOV horizontally. The translated caption font size ranges from 1.2 to 2.0° FOV.
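To show how an FOV-based specification maps back to pixels on a given display, here is a small sketch using a simple pinhole projection. The display values in the example (30° horizontal FOV, 640 pixels wide) are purely hypothetical and are not the specifications of the LEION HEY hardware.

```python
import math


def angle_to_pixels(angle_deg: float, display_fov_deg: float, display_px: int) -> float:
    """Pixels covered by a centered object spanning angle_deg of the view.

    Assumes a flat virtual image and a pinhole projection model.
    """
    # Focal length in pixels implied by the display's FOV and resolution.
    focal_px = (display_px / 2) / math.tan(math.radians(display_fov_deg) / 2)
    return 2 * focal_px * math.tan(math.radians(angle_deg) / 2)


# Hypothetical display: 30 degrees wide, 640 pixels wide.
text_box_px = angle_to_pixels(20.0, 30.0, 640)
font_min_px = angle_to_pixels(1.2, 30.0, 640)
font_max_px = angle_to_pixels(2.0, 30.0, 640)
print(round(text_box_px), round(font_min_px, 1), round(font_max_px, 1))
```

Specifying sizes in degrees keeps the perceived text size constant even if a later hardware revision changes the display resolution or optics.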

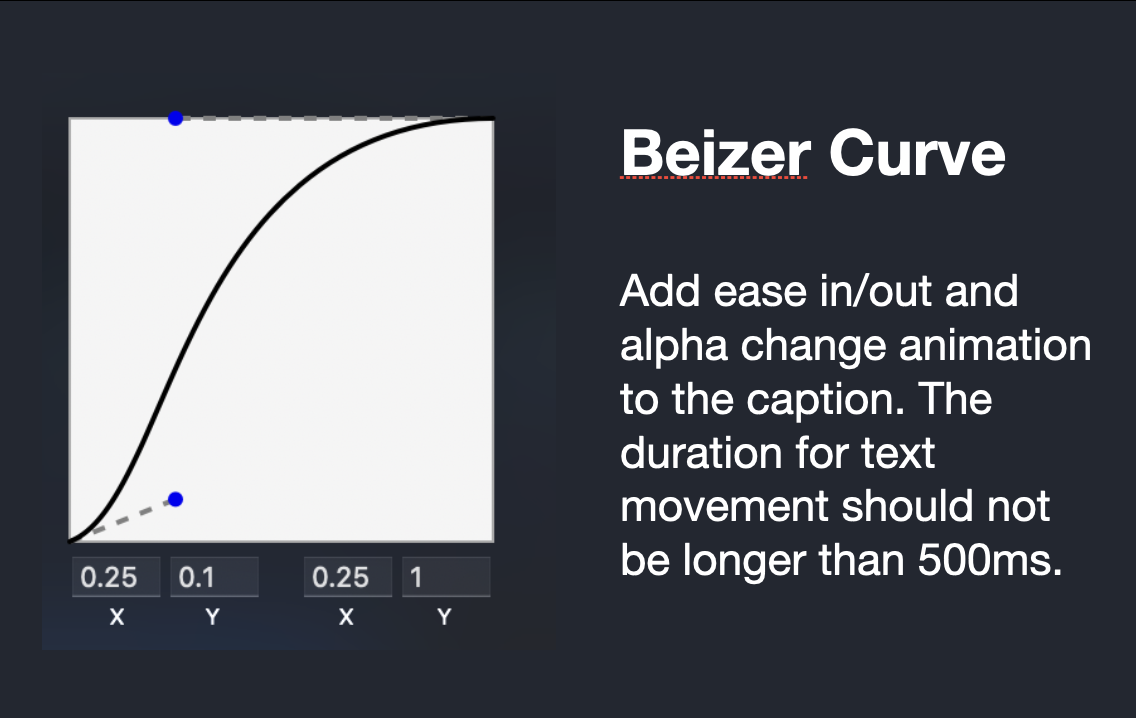

Additionally, to prevent jarring caption jumps that could cause visual fatigue for users, we introduced easing animations based on Bezier curves for the scrolling of AR captions. This means a slow acceleration at the beginning of scrolling and a gradual deceleration as it reaches its destination. This creates a pause effect when a new sentence is translated, significantly alleviating user fatigue.
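A minimal sketch of Bezier-based easing follows. The control points (0.42, 0) and (0.58, 1) are the common "ease-in-out" values and are an assumption here, since the curve actually used in the product is not stated; the function names are hypothetical.

```python
def cubic_bezier_ease(t: float, x1=0.42, y1=0.0, x2=0.58, y2=1.0) -> float:
    """Eased progress (0..1) for a time fraction t (0..1).

    The curve runs from (0, 0) to (1, 1) with control points (x1, y1), (x2, y2).
    """
    def bez(u, p1, p2):
        # Cubic Bezier coordinate with fixed endpoints 0 and 1.
        return 3 * (1 - u) ** 2 * u * p1 + 3 * (1 - u) * u ** 2 * p2 + u ** 3

    # Find the curve parameter u whose x-coordinate equals t (bisection).
    lo, hi = 0.0, 1.0
    for _ in range(40):
        mid = (lo + hi) / 2
        if bez(mid, x1, x2) < t:
            lo = mid
        else:
            hi = mid
    return bez((lo + hi) / 2, y1, y2)


def scroll_offset(start: float, end: float, t: float) -> float:
    """Caption scroll position at time fraction t of the animation."""
    return start + (end - start) * cubic_bezier_ease(t)


for step in range(0, 11, 2):
    print(step / 10, round(scroll_offset(0.0, 48.0, step / 10), 2))
```

The eased offset changes slowly near the start and end of the animation and fastest in the middle, which matches the slow acceleration and gradual deceleration described above.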

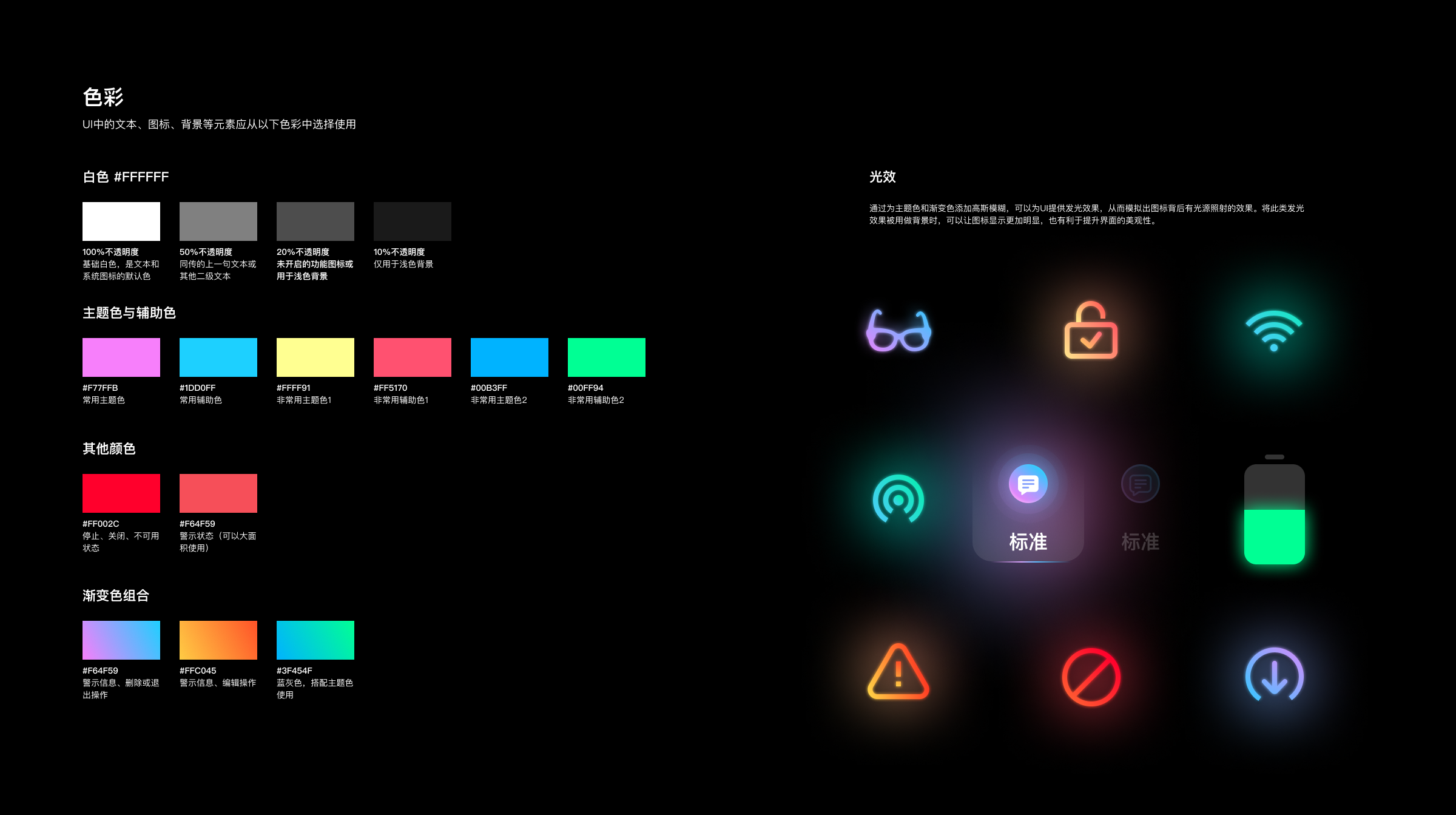

Visual Identity

Surveying the world around us, auditory perception can capture a vast amount of information, rivaling the richness of visual information. Considering that the essence of LEION HEY AR glasses is to break down language and cultural barriers, allowing everyone to perceive the diverse and vibrant world, we incorporated a theme of colorful diffused light into the visual identity. This theme symbolizes rich information and augmented reality.

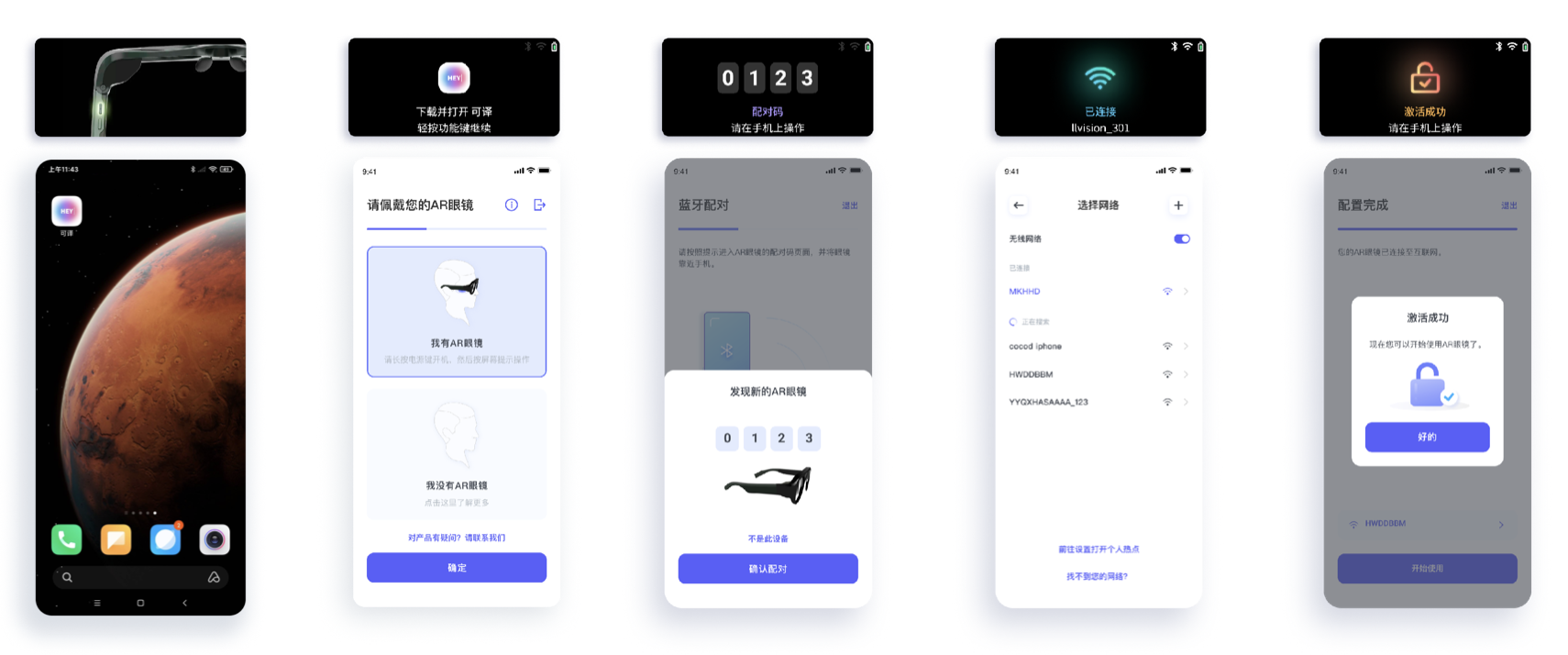

Quick Initialization

Our early user research and expert reviews indicated that the ability for users to quickly experience a product's core functionality upon unboxing (seeing translated subtitles and visualized sound) is a critical factor in shaping product reputation. It was clear that we needed to prioritize optimizing the AR glasses' boot-up time. By trimming unnecessary functions from the Android Go system and limiting auto-start processes, we managed to reduce boot time to around 25 seconds.

However, for first-time users, the processes of pairing the AR glasses with a smartphone, connecting to Wi-Fi, and activating the glasses required further streamlining. Considering both user experience and technical feasibility, we set a target of less than 2 minutes from power-on to the user seeing subtitles.

Firstly, we optimized the Bluetooth pairing process. As a crucial function for remote control of language selection, user interface settings, and data transfer, the AR glasses needed to pair with the smartphone as quickly as possible after booting up. Since smartphones often turn off Bluetooth searches after several tens of seconds due to power optimization strategies, we needed to ensure the AR glasses broadcast for as long as possible and wait for the smartphone to search. After booting up and completing the tutorial, the AR glasses would pause on a Bluetooth pairing screen, broadcasting for 10 minutes and displaying the pairing PIN to ensure users could download the mobile app, register, and reach the pairing screen. Additionally, we streamlined the pairing process for user convenience. The smartphone would automatically detect the strongest Bluetooth signal within 2 seconds and display the device ID on the screen, eliminating the need for users to repeatedly click buttons and enter PINs manually. For scenarios with multiple AR glasses, such as classrooms for the hearing impaired, users could directly enter the PIN displayed on their AR glasses to complete the pairing.
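The "strongest signal" selection can be sketched as follows. This is an illustration only: the scan results are represented as a plain dictionary of hypothetical device IDs and RSSI values, and no real Bluetooth API is used.

```python
from typing import Optional


def pick_strongest(scan_results: dict) -> Optional[str]:
    """Return the device ID with the highest RSSI, or None if nothing was found.

    scan_results maps device ID -> RSSI in dBm (values closer to 0 are stronger).
    """
    if not scan_results:
        return None
    return max(scan_results, key=scan_results.get)


# Hypothetical readings collected during a 2-second scan window.
readings = {"HEY-0421": -48, "HEY-1177": -71, "HEY-0093": -80}
print(pick_strongest(readings))
```

In a room with many pairs of glasses, several devices may report similar signal strength, which is why the text keeps manual PIN entry as a fallback.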

Secondly, we simplified the process of connecting the AR glasses to the mobile internet. In addition to leveraging Apple MFi certification for direct connection to mobile hotspots, we also allowed users to save frequently used Wi-Fi SSIDs and passwords under their accounts.

System Efficiency

In addition to accurate translation, comfortable reading, and visually appealing design, LEION HEY also requires a highly economical and efficient system.

Firstly, we stagger tasks such as Bluetooth, WLAN, audio compression, and display refresh to avoid simultaneous operations that could lead to peak power consumption. This prevents the AR glasses from experiencing sustained, rapid accumulation of high temperatures.

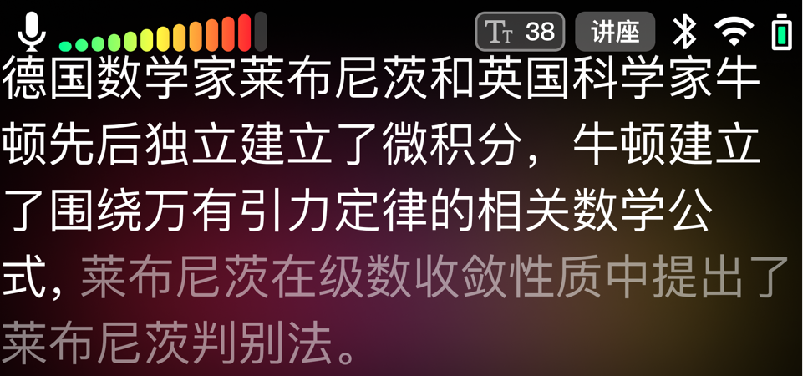

Secondly, to conserve power and achieve a continuous usage time of 2 hours, which meets the demands of typical half-day scenarios, we optimized the display, which consumes a significant portion of the device's power. Features like volume indicators, background color switching, and icon status changes are displayed at refresh rates of 1 to 5 Hz. For captions that require smooth scrolling, we use a high refresh rate of 60 Hz only while the subtitles are in motion. Voice segments are sent from the AR glasses at most once every 80 ms to reduce network request frequency. Additionally, when there is no voice input for an extended period, the AR glasses automatically enter sleep mode.
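The refresh-rate policy can be summarized in a few lines. The function below is a hypothetical sketch; in particular, the split between 5 Hz for changing status elements and 1 Hz for an idle screen is an assumption made to illustrate the 1 to 5 Hz range mentioned in the text.

```python
SEND_INTERVAL_MS = 80  # from the text: interval between voice segments


def pick_refresh_hz(captions_scrolling: bool, status_changing: bool) -> int:
    """Choose a display refresh rate for the current frame (illustrative policy)."""
    if captions_scrolling:
        return 60   # smooth scrolling only while captions are in motion
    if status_changing:
        return 5    # volume bar, icons, background color (assumed upper bound)
    return 1        # idle screen (assumed lower bound)


requests_per_second = 1000 / SEND_INTERVAL_MS
print(pick_refresh_hz(True, False), pick_refresh_hz(False, True), requests_per_second)
```

With an 80 ms interval, the glasses issue at most 12.5 audio uploads per second.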

Finally, cost savings for consumers are also a focal point in optimizing system efficiency. After repeated testing, we determined that sending Opus-compressed audio to the server, sampled at 16 kHz with 16-bit depth, is effective for speech recognition. The compressed audio is only about one-eighth the size of the raw audio, preserves sufficient detail for recognition, and the compression algorithm wastes little time or computing resources.
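The data savings can be checked with some quick arithmetic. The sketch assumes a single (mono) audio channel, which the text does not state, and takes the one-eighth ratio at face value.

```python
SAMPLE_RATE_HZ = 16_000   # from the text
BITS_PER_SAMPLE = 16      # from the text
CHANNELS = 1              # assumption: mono capture
COMPRESSION_RATIO = 8     # from the text: compressed size is 1/8 of raw

raw_bps = SAMPLE_RATE_HZ * BITS_PER_SAMPLE * CHANNELS
compressed_bps = raw_bps // COMPRESSION_RATIO

# Data volume for one hour of continuous speech, in megabytes (10^6 bytes).
raw_mb_per_hour = raw_bps / 8 * 3600 / 1e6
compressed_mb_per_hour = compressed_bps / 8 * 3600 / 1e6

print(raw_bps, compressed_bps, raw_mb_per_hour, compressed_mb_per_hour)
```

Under these assumptions, raw audio needs 256 kbit/s (about 115 MB per hour), while the compressed stream needs 32 kbit/s (about 14 MB per hour), which matters when the glasses are connected through a phone's cellular hotspot.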

It’s More Than Just Captions

We offer different subtitle display modes for various usage scenarios. For example, in conversation mode, subtitles refresh from the bottom of the AR glasses to avoid obstructing the other person's face and body movements. In lecture mode, subtitles strive to avoid line breaks and refresh from the top, freeing up the lower field of view for note-taking convenience. Additionally, for the elderly population, the AR glasses can enlarge the font size and streamline translation results' semantics in real-time using AI algorithms, enhancing readability.

LEION HEY AR glasses also come equipped with a range of background colors. When combined with the results from the server's speech recognition engine, subtitles for positive emotional speech are displayed with warm-colored backgrounds, while negative emotional speech is complemented with cool-colored backgrounds. Moreover, these backgrounds help users read subtitles in visually complex environments, such as settings with plants, cables, or other intricate lines.

During conversations, the AR glasses display a volume bar in the top left corner of the screen. It visualizes the common range from 18 to 96 dB encountered in daily life, with each segment representing 6 dB. This means that each time the distance to the sound source is halved or doubled, the volume bar increases or decreases by about one segment. The green zone represents very low volume (such as whispering or ambient noise in quiet conditions), the yellow zone indicates a moderate volume (like speaking loudly), and the red zone signifies loud noise (such as near a car engine or a power drill). Users can quickly adapt to this visual representation and gain an intuitive sense of changes in volume.
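The 6 dB step lines up with a simple acoustic rule of thumb: for a point source in open space, doubling the distance lowers the sound level by about 6 dB. The sketch below maps a level to bar segments and checks that rule. The function names are hypothetical, and whether the lowest level lights zero or one segment is an assumption.

```python
import math

MIN_DB, MAX_DB, STEP_DB = 18.0, 96.0, 6.0            # from the text
TOTAL_SEGMENTS = int((MAX_DB - MIN_DB) / STEP_DB)    # 13 segments


def lit_segments(level_db: float) -> int:
    """Number of bar segments lit for a given sound level."""
    clamped = min(max(level_db, MIN_DB), MAX_DB)
    return int((clamped - MIN_DB) // STEP_DB)


def level_change_db(distance_ratio: float) -> float:
    """Level change when distance is multiplied by distance_ratio (free field)."""
    return -20.0 * math.log10(distance_ratio)


print(TOTAL_SEGMENTS, lit_segments(60.0), round(level_change_db(2.0), 2))
```

Because each segment corresponds to one doubling or halving of distance, a user can read the bar as a rough indication of how a sound source is approaching or receding.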

We used the Virtualizer class from Android AudioEffect and the Realtime 3D Surface Mesh from SciChart WPF 3D Chart to develop these audio visualization effects. These animations vividly illustrate the characteristics of sound across various frequency bands. Our users, especially those with hearing impairments, can intuitively assess the current volume, whether the sound is sharp or deep, and the rhythm, all through these graphics.