XR Spatial Interaction

Sep 2017 - Jan 2024

This is the universal interaction solution for LLVision’s XR hardware. The purpose of this project is to deliver a comfortable, intuitive, and efficient experience to the user.

Contributions

Project management, product definition, market analysis, user research, UX and UI design, system architecture design

Design & Development Tools

Figma, Unity3D, Spline, Adobe After Effects, Xmind

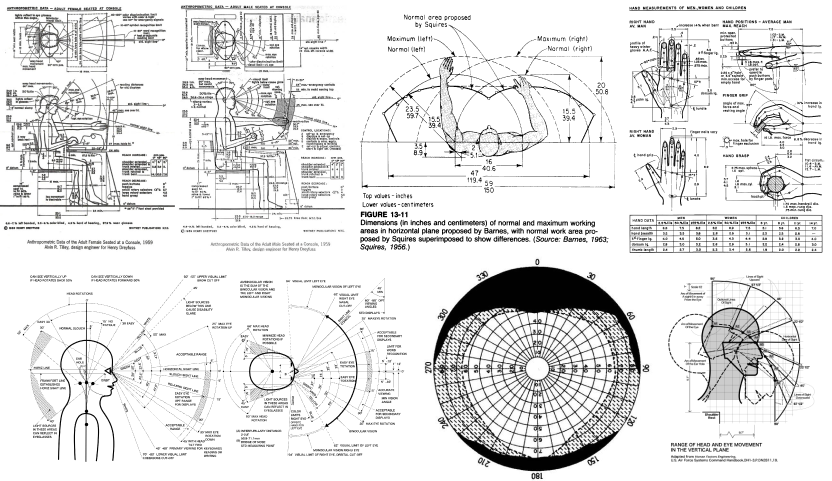

Understanding Human

To deliver a promising XR experience, we need to understand human factors. Our design team worked through a large body of anthropometric and ergonomic data before diving into the details.

Based on classic theories and the characteristics of our hardware, we worked out the appropriate XR layout, interaction methods, GUIs, and OS optimization guidelines, as well as the hardware specification definition.

Hardware Platform

This project is designed for LLVision’s LEION Pro AR glasses and LEION Ultra AR headset.

LEION Pro

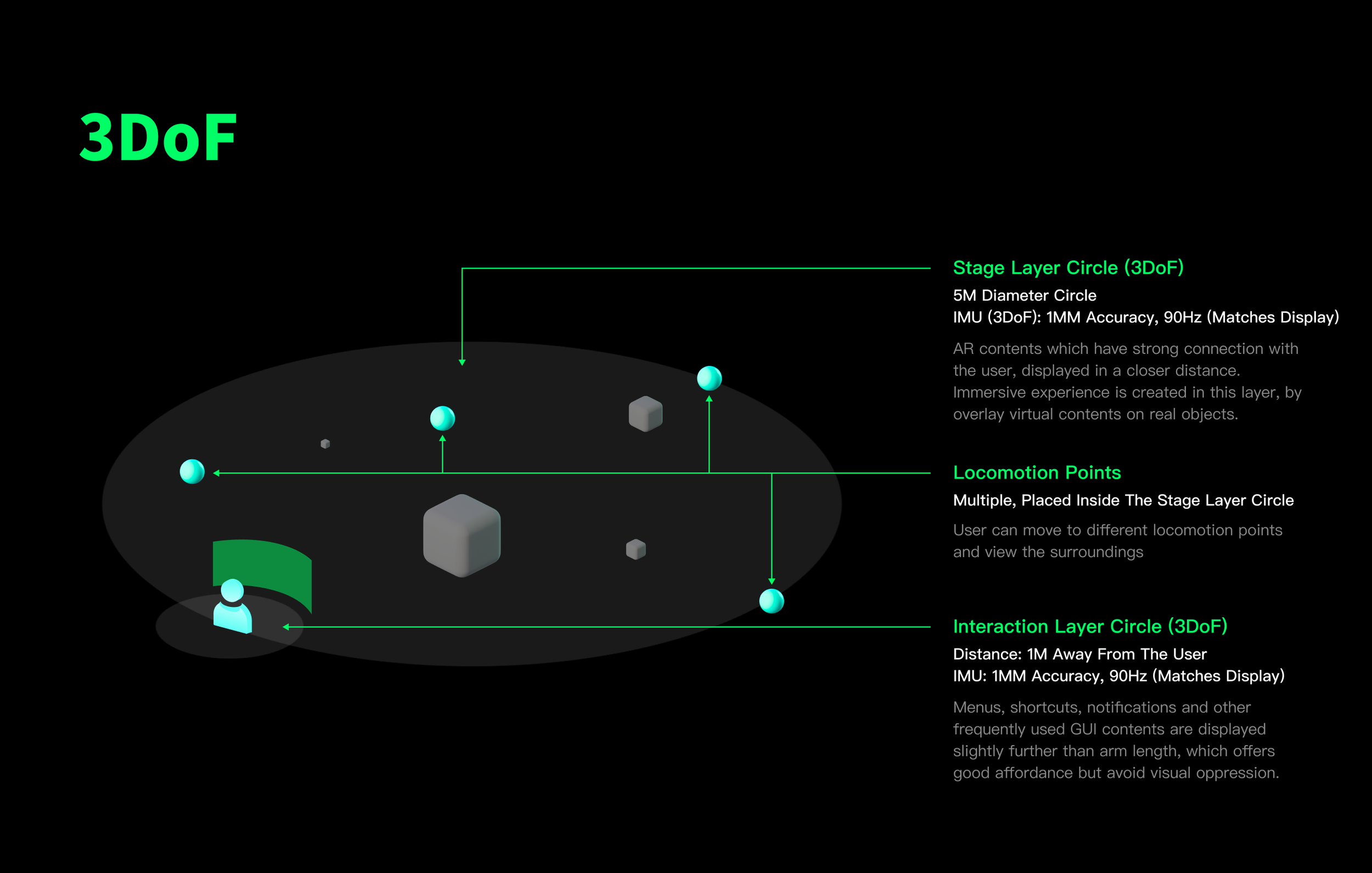

LEION Pro is a pair of tethered AR glasses with binocular waveguide displays. It supports 3DoF tracking, voice commands, and gesture pose control. QR codes, images, and objects can be recognized using the main camera together with the built-in AI chip.

LEION Ultra

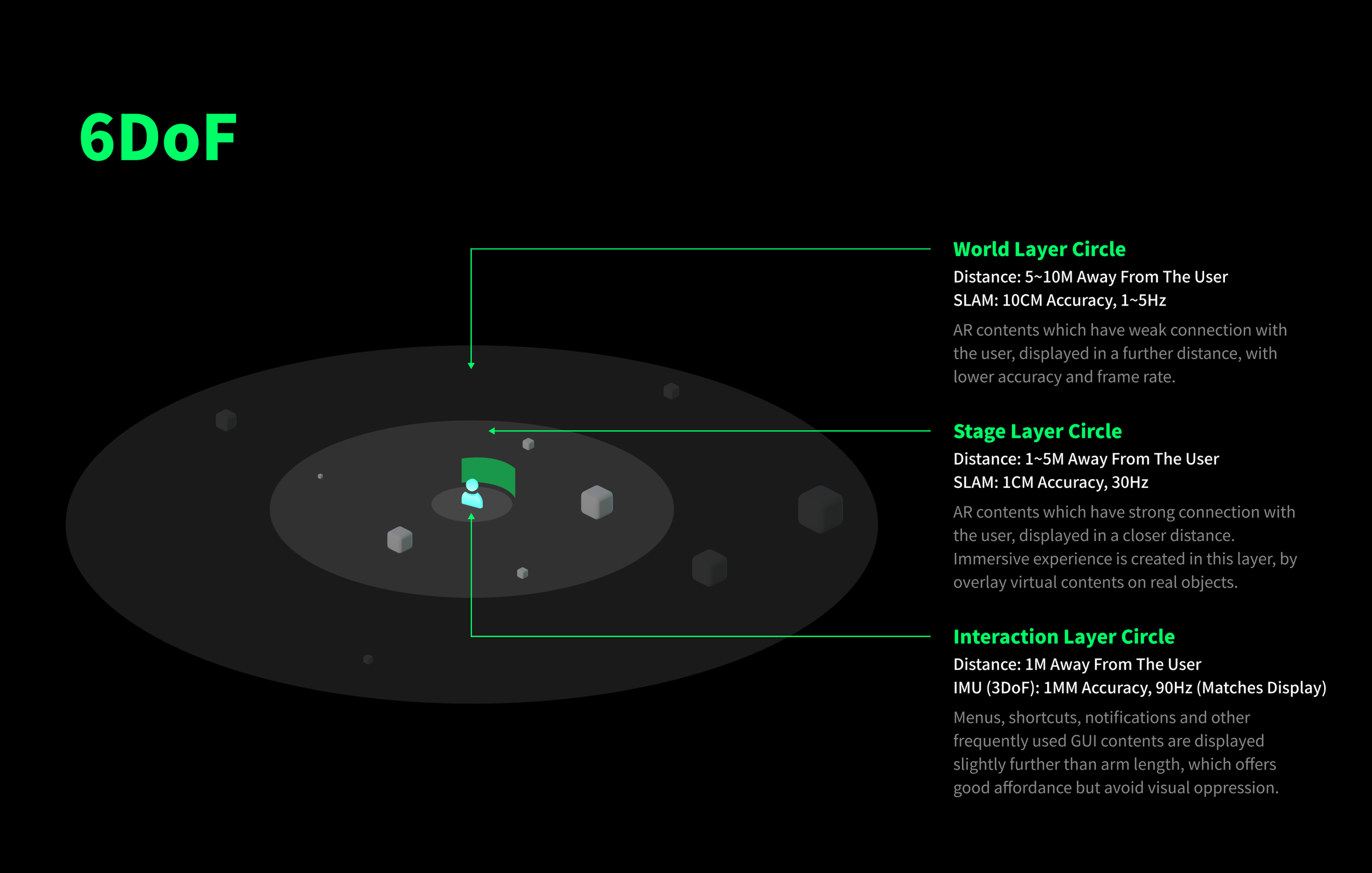

LEION Ultra is an AR headset designed specifically for professional enterprise users. It features 6DoF SLAM, 3D gesture recognition and tracking, voice commands, eye tracking, a physical dial, and intelligent multi-modal interactions. It has binocular waveguide displays and an electrochromic filter on the outside.

Spatial Layout

Because we overlay virtual content on the real world, users perceive it in the same way they perceive real objects.

According to our research:

We placed the home screen, menus, and feature icons 1 meter away from the user, so that users feel these elements are within their control without visual strain.

People tend to perceive objects within 5 meters as having some relationship with them, so high-accuracy XR content is displayed in the area 1 to 5 meters from the user.

People feel that anything farther than 5 meters away has less to do with them, so content in this area is displayed only to create an immersive experience.

As we have both 3DoF and 6DoF XR hardware, a universal layout keeps the products consistent and easy to develop for.
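The three distance bands above can be sketched as a simple lookup. This is a minimal illustration only: the zone names, the function name, and the way the exact 1 m and 5 m boundaries are assigned are assumptions, not part of the original design documents.

```python
from enum import Enum


class Zone(Enum):
    """Hypothetical names for the three distance bands described above."""
    INTERACTION = "interaction"  # home screen, menus, feature icons (about 1 m)
    CONTENT = "content"          # high-accuracy XR content (1 to 5 m)
    AMBIENT = "ambient"          # immersive background content (beyond 5 m)


INTERACTION_DISTANCE_M = 1.0  # from the text: UI placed 1 meter away
CONTENT_MAX_DISTANCE_M = 5.0  # from the text: relationship felt within 5 meters


def classify_zone(distance_m: float) -> Zone:
    """Map a distance from the user (in meters) to a layout zone.

    Boundary handling (<= at 1 m and 5 m) is an assumption.
    """
    if distance_m <= 0:
        raise ValueError("distance must be positive")
    if distance_m <= INTERACTION_DISTANCE_M:
        return Zone.INTERACTION
    if distance_m <= CONTENT_MAX_DISTANCE_M:
        return Zone.CONTENT
    return Zone.AMBIENT
```

Because the zones depend only on distance from the user, the same lookup could in principle be shared by 3DoF and 6DoF devices.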

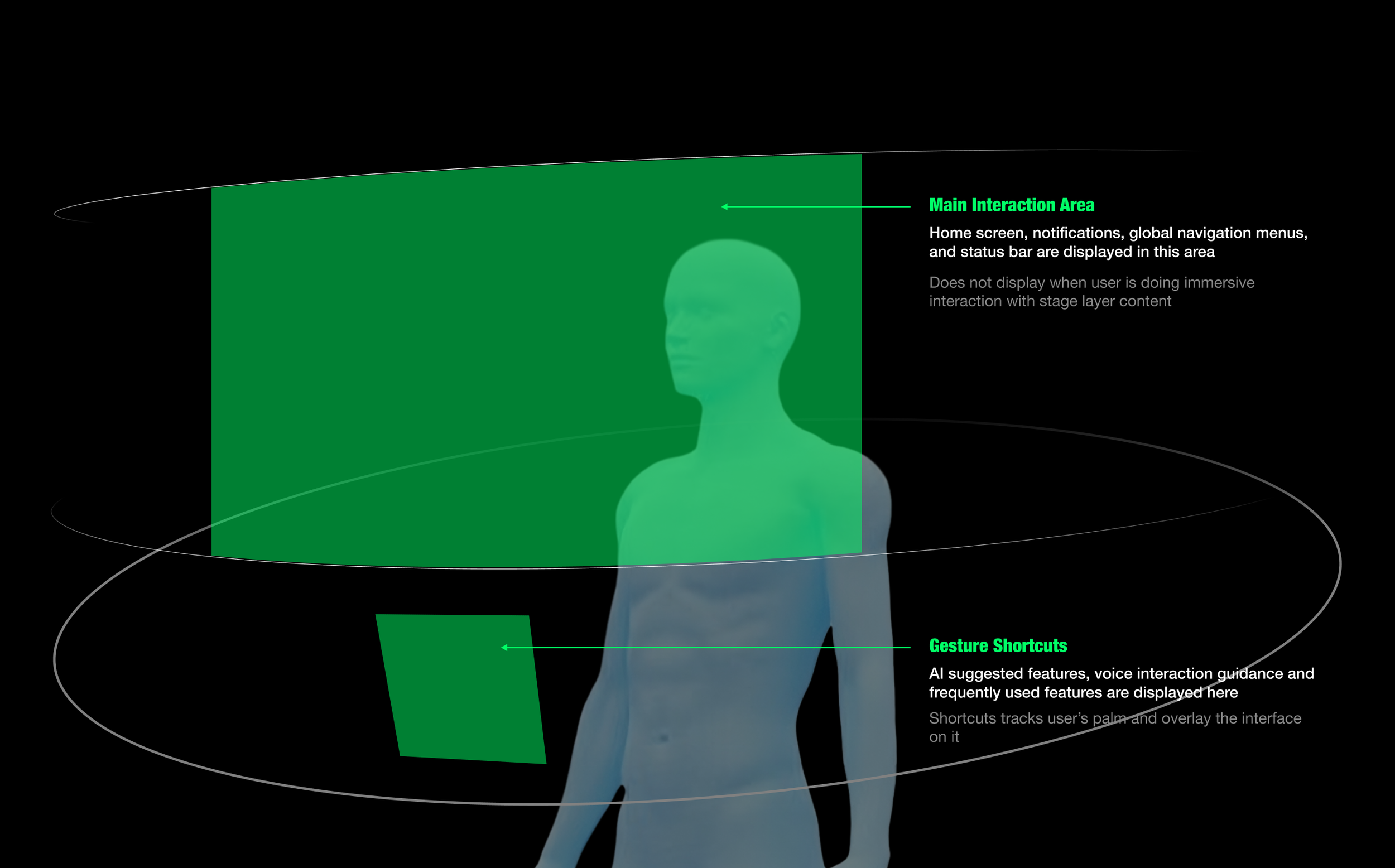

Interaction Layer Canvas

On XR devices, the interaction layer canvas is defined by the range of easy, comfortable head and eye rotation angles.

For observation and quick pointing interactions, content should be placed within the canvas area.

3D Objects in Stage Layer

3D models are displayed in the stage layer, while operating procedures, guidelines, and attachments are shown as highlighted labels on these models. When the user aims the cursor at a label, more details about that area are displayed on cards or menus.

Optimize 3D Model Performance

Render the model according to ambient lights

PBR materials with metal roughness and specular glossiness

Matte materials must be supported

≤100K polygons for a scene

Poisson-disk to triangular surface optimization recommended

3D content animations can be triggered with scripts, and the triggers can be set up in the scripts

Basic animations: pan, zoom, and rotate; scripts include speed, acceleration, and loop settings

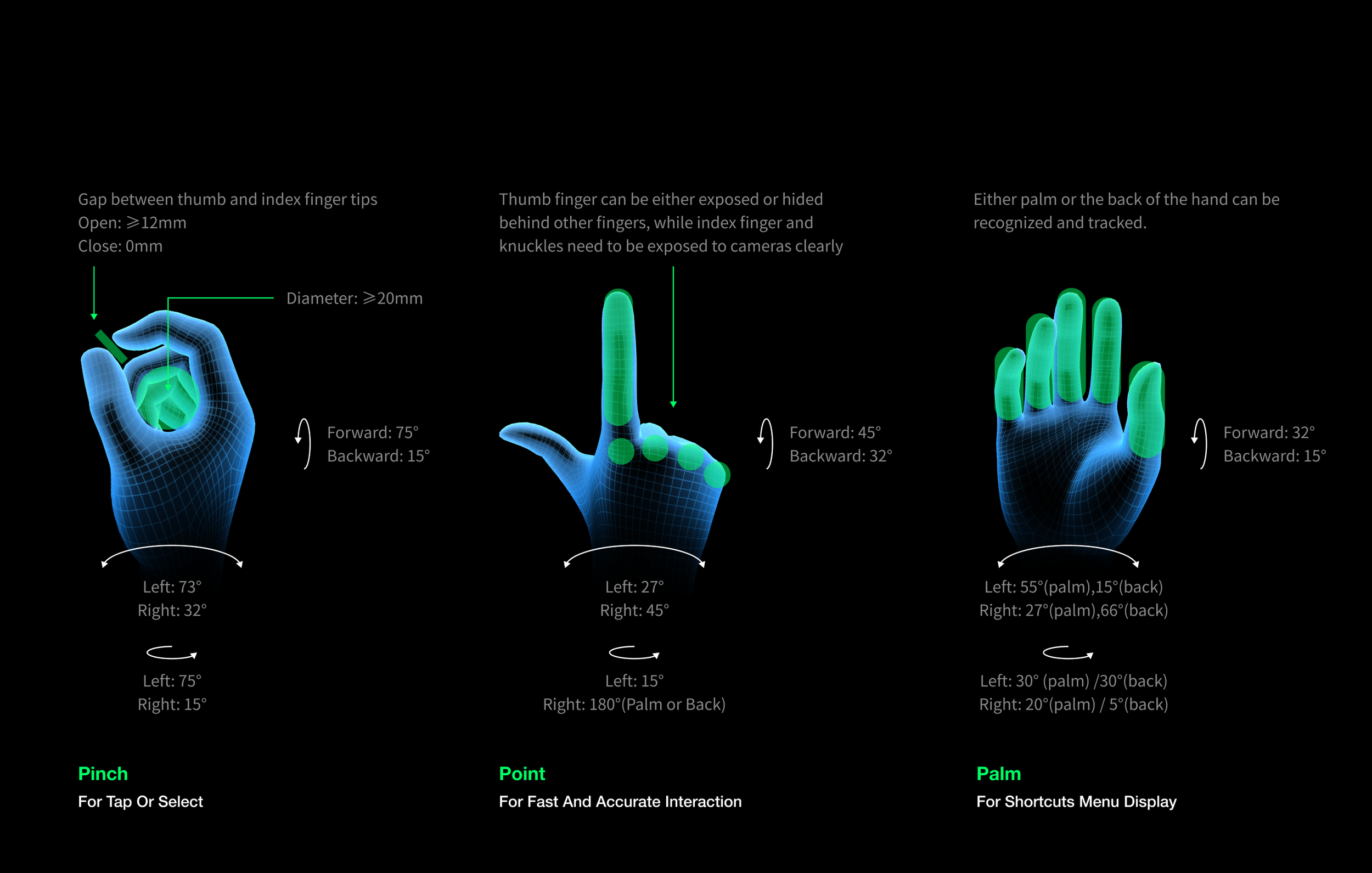

Gestures

To keep the system simple to use, only three gestures are used.

Pinch: pinch with thumb and index finger, to perform tap or select

Point: point with index finger, for more accurate interactions

Palm: to display the Shortcut Menus

All gestures should be recognized and tracked continuously within the proper range.

In field tests, we found that people tend to put their hands in front of the XR device when they first try it. By displaying operating guidelines in the Shortcut Menus, we give users a much easier way to access tutorials.

The design described above may not be suitable for all AR glasses. Lightweight, low-power AR glasses often rely on a single RGB camera, which is insufficient for generating detailed depth maps. To accommodate a wider range of use cases, these cameras may compromise on other features, leading to challenges in real-time hand tracking.

For such devices, we employ a simplified hand pose detection method that doesn't require continuous palm tracking. Additionally, for AR glasses with limited field of view and IMU performance, we adopt a simplified interaction method based on waving gestures. To avoid false positives caused by head movements or fast-moving objects, gesture recognition is temporarily disabled when the device detects significant body rotation.
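The rotation-based suppression described above might look roughly like the following sketch. The class name, the yaw-rate threshold, and the cooldown period are all hypothetical values chosen for illustration; the source does not state how "significant body rotation" is measured or how long recognition stays disabled.

```python
from dataclasses import dataclass, field


@dataclass
class GestureGate:
    """Temporarily disables gesture recognition during fast rotation.

    Both thresholds below are illustrative assumptions, not measured values.
    """
    max_yaw_rate_dps: float = 60.0  # hypothetical rotation threshold, deg/s
    cooldown_s: float = 0.3         # hypothetical hold-off after rotation
    _blocked_until: float = field(default=0.0, init=False, repr=False)

    def update(self, yaw_rate_dps: float, now_s: float) -> bool:
        """Feed one IMU sample; return True if gestures may be recognized."""
        if abs(yaw_rate_dps) > self.max_yaw_rate_dps:
            # Rotation is too fast: block recognition for the cooldown period.
            self._blocked_until = now_s + self.cooldown_s
        return now_s >= self._blocked_until


gate = GestureGate()
gate.update(yaw_rate_dps=5.0, now_s=0.0)    # slow head motion: enabled
gate.update(yaw_rate_dps=120.0, now_s=1.0)  # fast rotation: disabled
gate.update(yaw_rate_dps=5.0, now_s=1.4)    # cooldown elapsed: enabled again
```

The cooldown keeps recognition off for a short time after the rotation ends, so that motion blur or a still-settling camera image does not immediately produce a false wave.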

Eye Tracking

From the early stages of our AR glasses project, we recognized the value of eye tracking technology. It is not only a core technology for critical XR features such as foveated rendering, automatic pupillary distance measurement, and iris recognition, but it also plays a crucial role in providing natural interactions and understanding user visual attention. LLVision’s eye tracking solution was sourced from a third-party OEM, while we were responsible for specifying the technical requirements of the eye tracking module based on user research and comparisons of various solutions.

Over the past few years, we have conducted in-depth evaluations of solutions from various manufacturers, developing engineering prototypes for each:

MEMS-based solutions (represented by Adhawk): While compact and low-power, they suffer from poor shock resistance, high cost, and low accuracy.

RGB camera-based solutions (represented by Tobii and 7invensun): These solutions have larger sizes, higher power consumption, lower sampling rates, and poor resistance to ambient light glare. The module's placement can also significantly impact the overall design. However, the technology is relatively mature, and the accuracy is sufficient for precise data collection and interactive operations.

Infrared sensor-based solutions (represented by Inseye): These solutions are compact, low-cost, low-power, highly accurate, and have high sampling rates. They are also less susceptible to ambient light interference. However, the overall technology maturity is lower compared to the previous two.

≤1° Error

For accurate hover/click interaction and foveated rendering

≥1000 Hz Sampling Rate

To detect microsaccades

≤2x2 mm Packaging Size

To minimize the impact on product appearance

≤50 mW Power Consumption

For usable power consumption on lightweight devices
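As an illustration, the four target figures above can be expressed as a checklist against which a candidate module is compared. The class and function names are hypothetical, and the sample values in the usage example are made up for demonstration; they are not measurements of any vendor's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EyeTrackerSpec:
    """Datasheet figures for a candidate eye tracking module."""
    error_deg: float
    sampling_hz: float
    package_width_mm: float
    package_height_mm: float
    power_mw: float


def unmet_requirements(spec: EyeTrackerSpec) -> list:
    """Return the targets listed above that the module fails to meet."""
    issues = []
    if spec.error_deg > 1.0:
        issues.append("error exceeds 1 degree")
    if spec.sampling_hz < 1000.0:
        issues.append("sampling rate below 1000 Hz")
    if spec.package_width_mm > 2.0 or spec.package_height_mm > 2.0:
        issues.append("package larger than 2x2 mm")
    if spec.power_mw > 50.0:
        issues.append("power consumption above 50 mW")
    return issues


# Made-up example values, for illustration only.
candidate = EyeTrackerSpec(error_deg=0.8, sampling_hz=120.0,
                           package_width_mm=5.0, package_height_mm=5.0,
                           power_mw=200.0)
for issue in unmet_requirements(candidate):
    print(issue)
```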

As of January 2024, when the project concluded, we believed that eye tracking technology was still unable to generate large-scale commercial value in the enterprise market, especially for OST (Optical See-Through) AR devices. Several challenges remain to be overcome: low accuracy, high power consumption, large size, insufficient slip compensation, susceptibility to ambient light, high latency due to low sampling rates, and the inability to filter out microsaccades. In addition, each user needs to perform a personalized calibration when wearing the device. However, eye tracking can serve as a data collection terminal in research and corporate knowledge base construction, especially for recording work methods and evaluating work efficiency and quality.

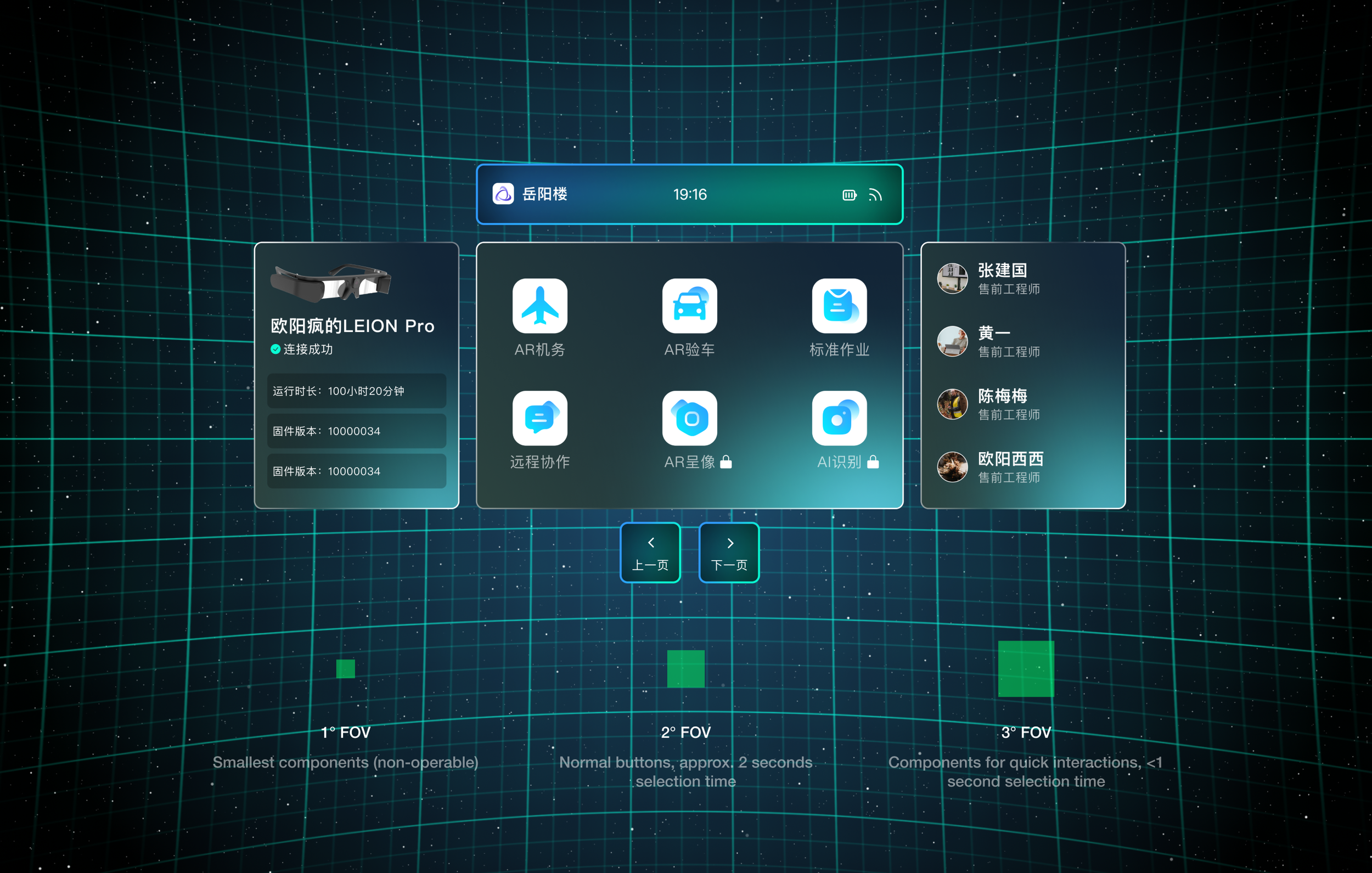

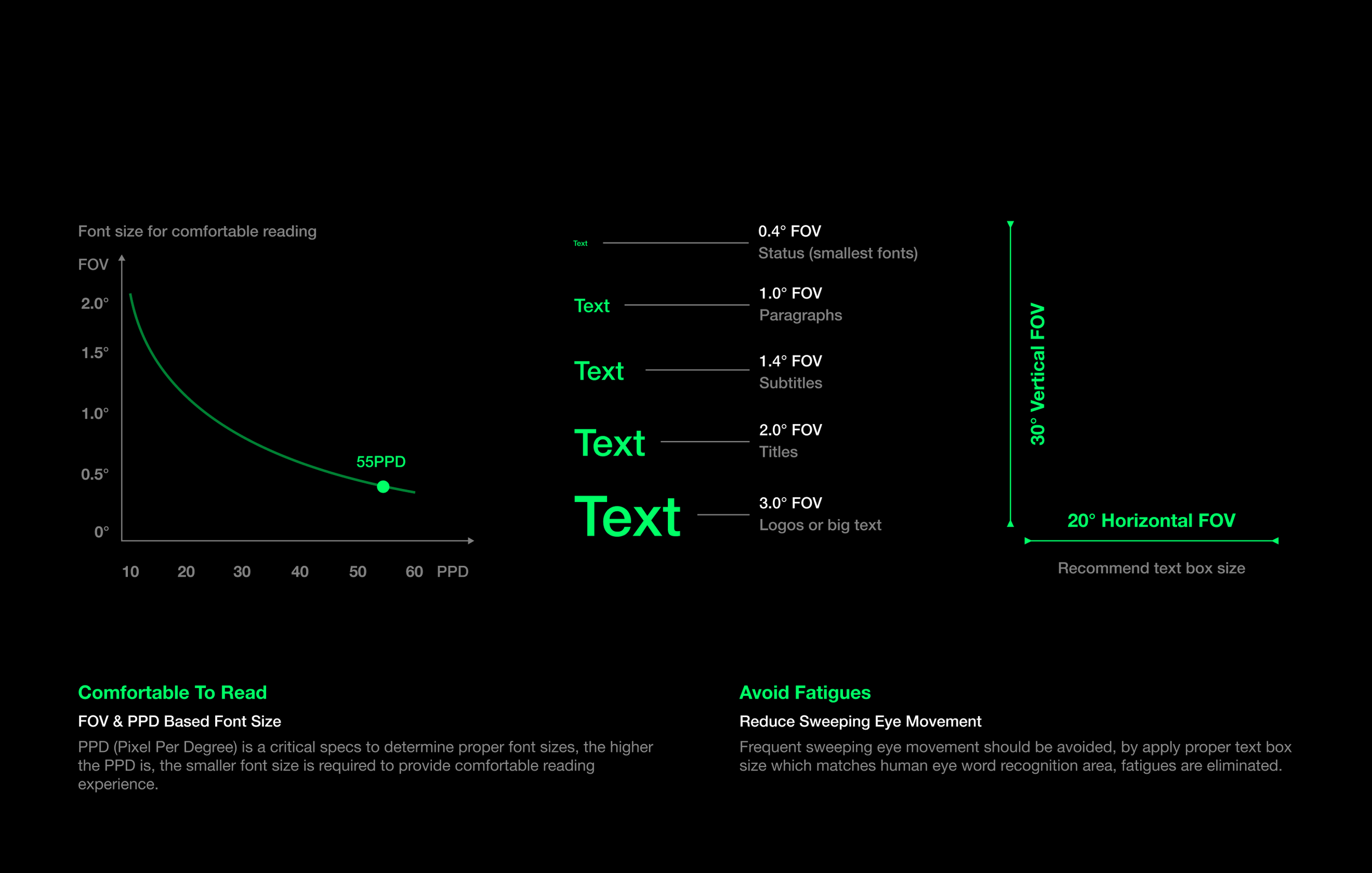

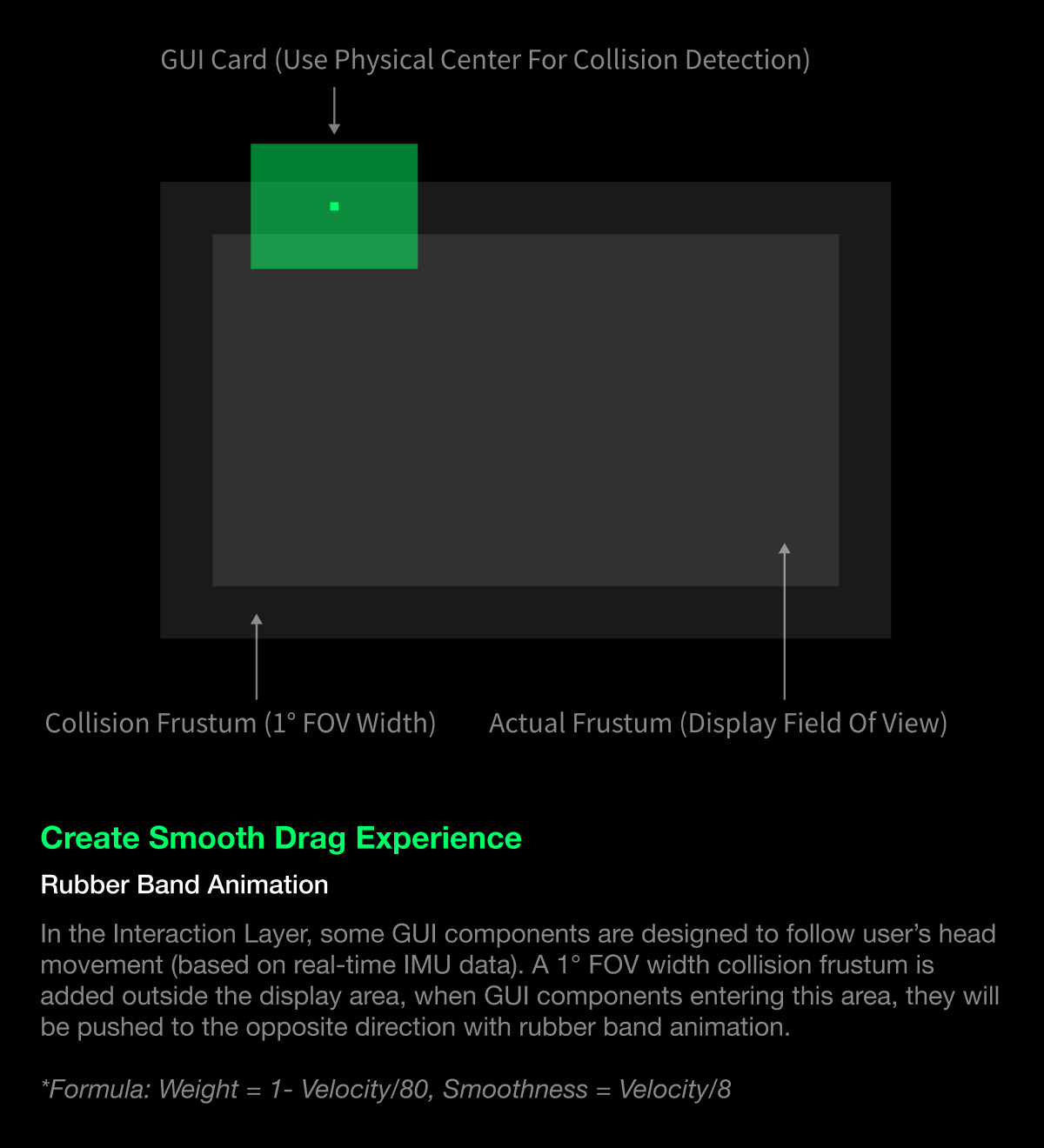

Use FOV Instead of Pixels

Unlike mobile GUIs, XR works in a different way. We use FOV (Field Of View) instead of pixels to establish design guidelines, because it represents sizes accurately at any distance.

Buttons and icons that need to be operated quickly should be wider than 3° FOV, while normal ones are 2° FOV. Components that require accurate viewing should be no narrower than 1° FOV.

Font sizes are a different story: the higher the display PPD (Pixels Per Degree), the smaller the font size that can be used for comfortable reading. However, the text box area should be limited to 20°x30° FOV, as this reading area requires no significant eye movement, so fatigue can be avoided.
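To place a component in a 3D scene, an angular width has to be converted into a physical width at a given distance. The sketch below uses the standard relation w = 2·d·tan(θ/2); the function name is an assumption, and the 1 meter distance in the example is taken from the spatial layout described earlier.

```python
import math


def angular_to_width_m(angle_deg: float, distance_m: float) -> float:
    """Physical width (meters) that subtends angle_deg at distance_m."""
    return 2.0 * distance_m * math.tan(math.radians(angle_deg) / 2.0)


# Widths at 1 m for the three guideline sizes (3, 2, and 1 degrees).
for angle in (3.0, 2.0, 1.0):
    width_cm = angular_to_width_m(angle, distance_m=1.0) * 100.0
    print(f"{angle:.0f} deg at 1 m is about {width_cm:.1f} cm")
```

At 1 meter these come out to roughly 5.2 cm, 3.5 cm, and 1.7 cm. Moving the same component to 2 meters doubles its required physical width while its angular size stays the same.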
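When a design eventually has to be rasterized for a specific display, angular sizes can be converted to pixels through the display's PPD. The sketch below assumes PPD is uniform across the field of view, which is only an approximation on real optics; the function name and the 40 PPD figure are hypothetical.

```python
def angular_to_pixels(angle_deg: float, ppd: float) -> int:
    """Approximate pixel extent of an angular size on a display with the given PPD."""
    if ppd <= 0:
        raise ValueError("ppd must be positive")
    return round(angle_deg * ppd)


# The recommended 20 x 30 degree text box on a hypothetical 40 PPD display.
HYPOTHETICAL_PPD = 40.0
side_a_px = angular_to_pixels(20.0, HYPOTHETICAL_PPD)
side_b_px = angular_to_pixels(30.0, HYPOTHETICAL_PPD)
print(side_a_px, side_b_px)
```

The angular guideline stays fixed across devices; only the pixel budget for the same text box changes with PPD.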

Make It Smooth And Fluid

To create a smooth and fluid interaction experience, low MTP (Motion To Photon) latency is required. We optimized the time warp algorithm by submitting the frame as close to V-Sync as possible, so the rendered image matches the user’s latest pose more accurately.

We tested Microsoft MRTK on LEION Ultra, and it showed very low MTP latency.

Note: The color error in the video is caused by shooting directly through the diffractive waveguide optics.

GUIs

Cards

A group of content items (text, images, videos, icons) is placed on a single card. Use multiple cards to display different types of information.

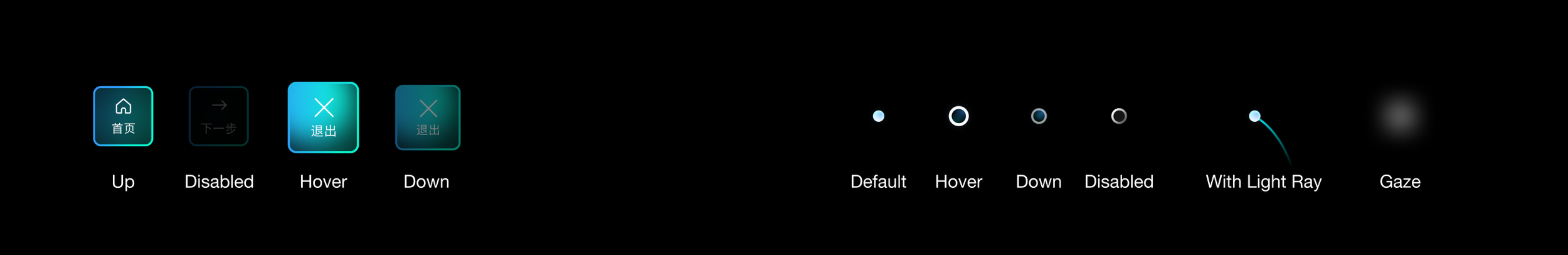

Buttons

A button changes its appearance (size, alpha, and glow effect) according to its current state.

Cursor

When necessary, use a Light Ray to build a visible connection between the GUI and the user. The curved ray has a -5° tilt angle and a gradually fading tail, which gives the user an intuitive pointing experience.

*A stabilization algorithm is required for the cursor
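The source does not say which stabilization algorithm was used. As one simple possibility, an exponential moving average damps jitter from head or hand tracking at the cost of some lag. The class name and the smoothing factor below are assumptions for illustration only.

```python
class CursorSmoother:
    """Exponential moving average over 2D cursor positions.

    A smaller alpha gives a steadier cursor but more lag.
    """

    def __init__(self, alpha: float = 0.3):
        if not 0.0 < alpha <= 1.0:
            raise ValueError("alpha must be in (0, 1]")
        self.alpha = alpha
        self._state = None  # last smoothed (x, y), or None before first sample

    def update(self, x: float, y: float):
        """Feed a raw cursor sample and return the smoothed position."""
        if self._state is None:
            # The first sample initializes the filter without smoothing.
            self._state = (x, y)
        else:
            sx, sy = self._state
            a = self.alpha
            self._state = (sx + a * (x - sx), sy + a * (y - sy))
        return self._state


smoother = CursorSmoother(alpha=0.5)
smoother.update(0.0, 0.0)
print(smoother.update(2.0, 4.0))  # halfway between the old and new samples
```

A fixed smoothing factor trades responsiveness for stability; speed-adaptive filters are a common refinement when fast pointing and precise hovering both matter.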